Intelligent robotics

The intelligent robotics program aims to provide robot solutions for diverse fields including manufacturing, agriculture, health care and civil engineering.

Led by Associate Professor Mats Isaksson, this research program leverages Swinburne’s technology expertise in robotics, mechatronics, automatic control, computer vision, artificial intelligence and Industry 4.0 to develop purpose-built robots and automation solutions for a wide range of applications.

Our research streams

There are five research streams within this program:

Field robotics

The aim of this stream is to develop purpose-built robots for unstructured environments, targeting applications in mining, farming and construction. The requirements for these applications typically involve both advanced robotics and mechatronics in addition to novel solutions for autonomy and control.

Projects

In partnership with Telstra, we’re developing a network of mobile robots controlled over a 5G link in near real time. This project is investigating:

typical characteristics of 5G (extremely low latency, high reliability and fast throughput)

the industrial applications of real-time control and automation enabled by 5G

The mobile robots we’re working with can accomplish tasks such as parts delivery, product inspection and collaboration with human operators or other robots during the manufacturing process.

We’ve developed prototypes of autonomous underwater vehicles (AUVs) that take propeller-type and fish-like forms. These vehicles have potential applications in marine biology projects, dive site surveys, boat inspections and underwater exploration. Our major goal is to develop a real-time localisation system and autonomous navigation controller, so that an AUV can follow a specified path. Our research is aimed at seafloor mapping and at collecting sea wave-induced vibration data.

Prototype of an Autonomous Underwater Vehicle.

We’re developing mobile robotic systems to operate in unstructured environments, specifically orchard, agricultural and forestry environments.

The system features onboard sensors (including an inertial measurement unit and computer vision), superior mobility, agility and intelligence, and can be automated to undertake a variety of operations, such as assisting orchard workers, transporting, spraying, weeding, harvesting, data collection and environmental management.

Our research covers these topics:

novel locomotion and mobility of ground vehicle systems

modelling and disturbance-free proximity control of miniature aerial vehicles for environment and infrastructure monitoring and management

deep learning pattern recognition for robotic guidance in natural environments

error modelling and quantification of imaging systems to improve localisation

multi-sensor data fusion for accurate localisation and positioning

multi-agent cooperative control for agricultural and post-farm gate logistics

In partnership with Darkspede, we’re studying an efficient and safe way for a remote operator to complete a drone delivery task. We’re also looking at how to further train those operators by simulating all delivery scenarios in virtual reality (VR), based on data collected from real-world situations.

Using unmanned aerial vehicles (UAVs, also known as drones) to deliver goods has already proven to be effective and efficient, and is expected to gain more popularity in the near future. The major challenge of drone delivery is for the drone to handle the complex situations that arise in unstructured, uncontrolled environments on the way to its destination. One possible solution to this challenge is to introduce an operator who intervenes whenever the drone requires assistance and helps find an optimised route.

VR simulation has also been widely used for operator training in autonomous vehicle driving. In this case, the data collected from the trainee is valuable: by learning how a human operator performs corrections, the navigation system of the delivery UAV can itself be corrected. The data can be fed into the UAV learning system to further improve transport stability and navigation accuracy.

Researcher using virtual reality to assist with UAV (drone) navigation.

Data Delivery Drone Presentation

Unity Simulation

Artificial intelligence and autonomy

In this research stream, we’re targeting autonomous mobility platforms, including field sensing and perception. We’re researching positioning and navigation solutions with a strong focus on machine learning, artificial intelligence, computer vision, multi-sensor data fusion and autonomous navigation, as well as control in unstructured and unknown environments. We’re also researching multi-agent control and swarm intelligence.

Projects

Real-time video-based passenger analytics in action.

In partnership with Transport for New South Wales and iMOVE CRC, we’re developing and evaluating real-time vision-based solutions for Automatic Passenger Counting (APC). Interest in APC systems is rapidly growing as public transport providers seek accurate and real-time estimates of occupancy on their services. Accuracy benchmarks using pre-existing data sets offer some guidance, but must be supported by extensive in-field trialling.

By leveraging state-of-the-art deep neural networks and visual tracking techniques, we’re exploring RGB image-based solutions, representing systems that may be deployable with existing CCTV cameras, as well as more novel sensor choices such as RGB+Depth solutions.

We’re evaluating our research methods in offline testing as well as in-field trials, including peak-passenger metropolitan bus services in Sydney. The research is focused on low-cost, low-powered and easy-to-install solutions suitable for deployment on real passenger-facing public transport services.

In partnership with Bionic Vision Technologies, we’re exploring the adaptation of robotic vision techniques to establish a data-driven pipeline for enhancing wearable assistive technologies such as retinal implants.

Retinal implants, also known as bionic eyes, are the leading treatment for retinal diseases such as Retinitis Pigmentosa and Age-related Macular Degeneration. This implantable micro-electrode array can restore a sense of vision by electrically stimulating surviving retinal cells. The primary input to this process is video frames acquired from a head-mounted camera.

However, due to the highly restricted resolution of state-of-the-art implants (as low as 40 pixels), a significant down-sampling must be performed on the image frames captured. This has led us to consider computer vision and image enhancement algorithms to detect, extract and augment the signal passed to the implant in order to emphasise key features in the environment that are crucial to safe and independent visual guidance for the implant recipient.
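To give a sense of how severe this down-sampling is, here is a minimal sketch that block-averages a grayscale frame onto a very coarse grid. The 5 x 8 layout (40 pixels) and the `downsample` helper are assumptions made for illustration only. Plain block averaging is a generic baseline, not the processing pipeline used in this project.

```python
def downsample(frame, out_rows, out_cols):
    """Block-average a 2D grayscale frame (list of rows) to out_rows x out_cols.

    Assumes the frame has at least out_rows rows and out_cols columns,
    so that every output pixel covers at least one input pixel.
    """
    in_rows, in_cols = len(frame), len(frame[0])
    out = []
    for r in range(out_rows):
        # Row range of the input block that maps to output row r
        r0 = r * in_rows // out_rows
        r1 = (r + 1) * in_rows // out_rows
        row = []
        for c in range(out_cols):
            # Column range of the input block that maps to output column c
            c0 = c * in_cols // out_cols
            c1 = (c + 1) * in_cols // out_cols
            block = [frame[i][j] for i in range(r0, r1) for j in range(c0, c1)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


# Hypothetical example: a 20 x 32 camera frame reduced to a 5 x 8 grid (40 pixels)
camera_frame = [[7] * 32 for _ in range(20)]
implant_frame = downsample(camera_frame, 5, 8)
```

Each output pixel here averages 16 input pixels, so fine detail such as edges and small obstacles is lost. That loss is the motivation for enhancing key features before the reduction.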

In our research, we have explored the adaptation of robotic vision techniques, such as:

ground plane segmentation

human-vision-inspired algorithms for motion processing

state-of-the-art deep learning and deep reinforcement learning

We’ve established a data-driven pipeline for both learning and enhancing visual features of importance to high priority visuo-motor tasks, such as navigation and table-top object grasping. Through patient testing and simulated testing with virtual reality, we aim to develop and thoroughly evaluate vision processing solutions that improve functional outcomes, enhance independence and provide useful vision for patients implanted with these devices.

An example of vision processing and wearable assistive technologies in use.

In partnership with CSIRO and Excellerate Australia, we’re developing several multi-agent cloud robotic systems that can be used in environments such as school classrooms, or to provide first aid assistance for elderly people.

Cloud robotics merges the two rapidly progressing domains of robotics and cloud computing. Adding the cloud implies less dependence on human input and more support from ubiquitous resources. Industry 4.0 is envisioned to be a key area for the infusion of these robotic technologies, especially in automating applications such as sensing, actuating and monitoring through the emergence of cloud computing and wireless sensors. In particular, smart factories in remote locations, with their challenges to health, safety and environment, are our motivation for robotic inspection, maintenance and repair.

Cloud-aided robots can complement the sensors with action-oriented tasks, such as inspection, fault diagnosis and sensor testing. The tasks associated with these robotic applications are usually interdependent, latency sensitive and compute intensive. In addition, the resources are heterogeneous in terms of processing capability and energy consumption. These smart factory applications require robots to continuously update large amounts of data in order to execute tasks in a coordinated manner. Therefore, real-time requirements need to be met while tackling resource constraints.

Given this context, the specific aim of our research team is to design real-time resource allocation schemes that improve resource sharing among robots and optimise task offloading to the cloud for multi-agent cloud robotic systems. We’ve already implemented evolutionary approaches to make offloading decisions for a single-robot factory maintenance application in a sample oil factory workspace, and to find optimal resource allocation for the tasks of an emergency management service in a smart factory.
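The trade-off behind an offloading decision can be sketched with a simple latency comparison: offloading pays off only when upload time, network round trip and cloud compute time together undercut on-robot compute time. This is an illustrative model and not the evolutionary approach described above. The `Task` fields, function names and all numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Task:
    cycles: float     # CPU cycles the task needs (hypothetical figure)
    data_bits: float  # input data that must be uploaded if offloaded


def local_latency(task, robot_hz):
    """Time in seconds to run the task on the robot's own processor."""
    return task.cycles / robot_hz


def cloud_latency(task, cloud_hz, uplink_bps, rtt_s):
    """Time in seconds to upload the input, wait one round trip and compute in the cloud."""
    return task.data_bits / uplink_bps + rtt_s + task.cycles / cloud_hz


def should_offload(task, robot_hz, cloud_hz, uplink_bps, rtt_s):
    """Offload only when the cloud path is strictly faster than local execution."""
    return cloud_latency(task, cloud_hz, uplink_bps, rtt_s) < local_latency(task, robot_hz)


# Assumed platform: 1 GHz robot CPU, 20 GHz-equivalent cloud, 10 Mbit/s uplink, 50 ms round trip
heavy = Task(cycles=2e9, data_bits=8e6)  # compute-heavy task
light = Task(cycles=1e8, data_bits=8e6)  # light task with the same input size

offload_heavy = should_offload(heavy, 1e9, 2e10, 1e7, 0.05)
offload_light = should_offload(light, 1e9, 2e10, 1e7, 0.05)
```

Under these assumed numbers the heavy task is worth offloading and the light one is not, because the fixed upload cost dominates for small computations. A realistic scheme would also have to account for energy use, task interdependence and resources shared among several robots.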

The research group has also worked on several proofs of concept, including the development of cloud-aided robot hardware, face recognition, voice-based control, environment mapping and path planning via a cloud-supported robot. We are also supporting elderly people with first aid assistance using Turtlebot 2.0, as well as integrating robot teaching assistance in a school classroom.

An example of a multi-agent cloud robotic system.

Human-robot interaction

This stream aims to improve the quality of human-robot interaction, which is of growing importance as collaborative robots are used more and more in applications such as medical surgery, rehabilitation, entertainment and service. Our research covers topics such as collaborative robots (cobots), tele-robotics and task-oriented robot control.

Projects

Collaborative robots (cobots) are designed to work safely in close proximity to humans. The introduction of low-cost cobots has enabled robot applications in multiple new domains, including healthcare. However, programming a robot still requires expert knowledge, and the real revolution in cobot use will happen only when teaching a robot to perform a new task is simplified.

Funded through the Australian Research Council Training Centre for Collaborative Robotics in Advanced Manufacturing, our research is focused on making cobots learn tasks from human demonstration.

Funded through the Australian Research Council Discovery Projects scheme, our research is focused on:

understanding the dynamic behaviours of robotic control systems in networked environments

developing a new theory for the analysis and design of networked robotic control subject to network-induced constraints

Robotic control is used in many industry applications to improve efficiency, productivity and accuracy. The development of digital technology, especially Industry 4.0, brings in more networked control systems, which create new design challenges due to network-induced constraints such as delays, data packet dropouts and cyber attacks.
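The effect of one such constraint, packet dropout, can be illustrated with a toy discrete-time loop. The sketch assumes a scalar plant x[k+1] = a·x[k] + b·u[k] with proportional feedback, and an actuator that holds its last received command whenever a control packet is lost. The plant parameters, the gain and the hold strategy are illustrative assumptions, not results from this research.

```python
def simulate(x0, steps, dropped, a=1.1, b=1.0, gain=0.6):
    """Simulate x[k+1] = a*x[k] + b*u[k] with control packets sent over a lossy link.

    dropped is the set of time steps at which the control packet is lost.
    On a lost packet the actuator keeps applying its previous command.
    """
    x, u = x0, 0.0
    history = [x]
    for k in range(steps):
        if k not in dropped:
            u = -gain * x  # packet delivered: actuator receives a fresh command
        # otherwise the packet is lost and the stale command u is reused
        x = a * x + b * u
        history.append(x)
    return history


ideal = simulate(1.0, 3, dropped=set())  # every packet arrives
lossy = simulate(1.0, 3, dropped={1})    # the packet at step 1 is lost
```

With every packet delivered, the state halves at each step. Losing the packet at step 1 makes the stale command overshoot and push the state past zero before the loop recovers. Analysing when such loops remain stable under delays and dropouts is the kind of question a theory of networked control has to answer.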

We’re investigating smart algorithms for robotic and mechatronic systems subject to nonlinearities, disturbances and uncertainties. The research includes modelling and pattern recognition, control and optimisation, and sensing and data processing.

To date, our algorithms have been used in electric vehicle steer-by-wire systems, anti-lock brake systems, flexible robotic joints, industrial drives, unmanned autonomous vehicles and more.

A collaborative robot (or cobot) is a robot which has been specifically designed to work closely with humans without the need for additional safety equipment or fencing. To achieve this, the cobot is typically equipped with a variety of force and position sensors that detect the presence of humans and objects in the workspace.

This suite of sensors provides an opportunity for the robot system to be re-designed to evolve from a “blind” tool to an intelligent machine that can develop a “sense” of its environment and self-monitor the outcomes or products it creates.

This project will develop methods by which the cobot will self-evaluate its performance of tasks and outcomes in real time using information gathered from how it perceives motions and actions. Ultimately, it would be able to evaluate its performance in much the same way that a skilled craftsperson (e.g. welder, woodworker, etc.) knows by experience that they have produced a good quality product.

As humans adapt to and familiarise themselves with their cobot teammates, emergent work patterns and behaviours are likely to evolve and be subject to change. Similarly, augmentations to the cobot’s workflow patterns may emerge from AI-driven adaptations to the human operator’s own changing patterns and behaviours, or simply from upgrades to the system or changes to the underlying working conditions.

This presents the challenge of balancing the need to maintain and monitor potentially stringent compliance requirements against the need to allow sufficient scope for the human-robot collaboration to adapt to the task and establish efficient collaborative workflows. This project will explore strategies to account for such needs and keep process outputs within the bounds of compliance.

Medical and assistive robots

In this stream, we are researching novel robot solutions and devices for health care applications.

We believe that the introduction of low-cost, speed- and power-limited collaborative robots is set to revolutionise this field. We’re also interested in the role of bionic robotic devices, bio-sensing, brain-machine interfaces, and biomechanics modelling and control.

Projects

We have previously collaborated with Healthe Care, quantifying the ergonomic benefits to the surgeon when they’re using robot-assisted laparoscopic surgery (RALS) compared with traditional laparoscopic surgery.

Supported by CMR Surgical, we have recently started a new three-year project "A Quantitative Ergonomic Study of Robotic Surgery – Can Console Design Limit Injury and Aid Gender Equity in Surgery?" where we aim to find quantitative answers to these questions by using sensor fusion technologies, combining EMG and FMG measurement with motion capture data and advanced biomechanical modelling.

Robot-assisted laparoscopic surgery: an ergonomic investigation

In partnership with IR Robotics, we have developed a solution to automate photobiomodulation therapy of chronic pain. Integrating a thermal camera, a low-level infrared laser and a novel collaborative robot from ABB Robotics, our solution can automatically and safely perform photobiomodulation therapy on patients with soft tissue injury.

Building on Industry 4.0 technologies, the derived platform has the potential to self-adapt to provide treatments that are optimised to the individual.

A collaborative robot system for photobiomodulation of chronic pain

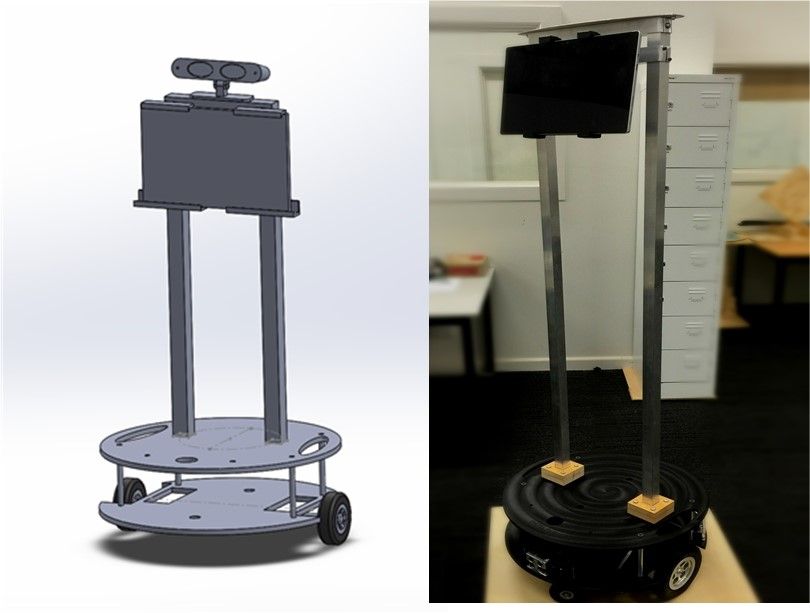

In partnership with the Baker Heart and Diabetes Institute, we’re exploring the use of teleoperation for medical diagnosis, specifically for heart ultrasounds in remote areas. Our project targets all aspects of robot-assisted remote ultrasound examinations.

Although medical advances are extending the life expectancy of the Australian population, access to health centres, hospitals and medical specialists can vary depending on where people live. Those in major cities or urban areas typically have excellent access to the relevant facilities and specialists; those in remote and rural areas are typically less fortunate.

One potential solution is the teleoperation of medical procedures. This technology is particularly important for a large, sparsely populated country like Australia. Our project utilises teleoperation for medical diagnosis, specifically for ultrasounds of the heart (an echocardiogram).

An echocardiogram uses high-frequency sound waves to capture images of the heart, enabling the cardiographer to study the heart’s functions, such as its pumping strength and the working of the valves, and to check for tumours or infectious growths. It is a very important tool for diagnosing heart conditions such as heart murmurs, an enlarged heart and valve problems.

In partnership with The Royal Children’s Hospital (RCH) and The Brainary, we've been developing a socially assistive humanoid robot as a therapeutic aid for paediatric rehabilitation. The aims are to motivate young patients, increase exercise adherence and deliver rehabilitation care efficiently.

After three and a half years of development and testing, the robot has engaged with over 50 patients, predominantly those with cerebral palsy, and is undergoing evaluation with therapists and parents within RCH’s rehabilitation clinic.

Adapting and deploying a general-purpose social robot as a therapeutic aid for diverse young patients with varying needs in a busy hospital setting comes with significant challenges. This is not typically addressed in traditional human-robot interaction or robotics research generally.

This project is thus exploring how state-of-the-art artificial intelligence and computer vision can be deployed long term in real-world clinical settings to augment therapy delivery and improve patient outcomes.

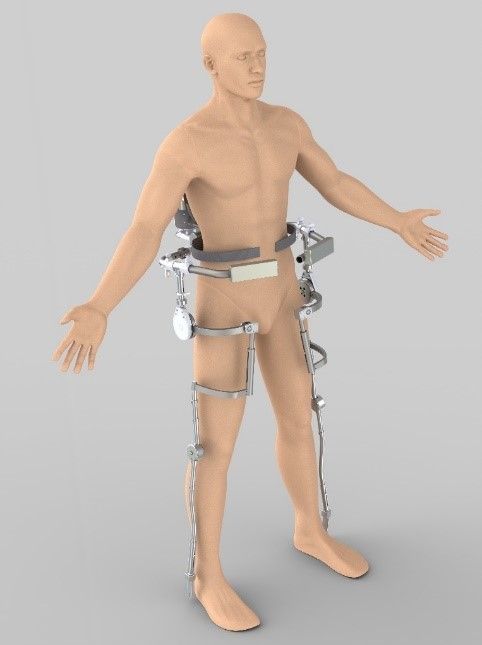

The rising cost of medical treatment and our rapidly ageing population have created a need for cheaper alternative methods of rehabilitation therapy and assistive technology. Advances in robotic technology have made robotic alternatives possible, with the potential to bridge the current treatment gap and provide better assistive orthoses.

This project is designing and developing an intelligent rehabilitative and assistive orthotic device for lower limbs and upper limbs, intended to aid stroke victims and those with limited limb mobility due to weak muscles. Our research covers:

the design, modelling and control of artificial muscles and smart actuators

assist-as-needed adaptive control to support and reinforce natural movements of limbs through the range of motion

intent detection and feedback assistance control based on bio-sensing

bio-sensing and instrumentation for patient condition monitoring and diagnosis

An example of an assist-as-needed robotic rehabilitation device.

We’re working with groups from the City of Wyndham to find out what robots can contribute to the quality of life for older adults and adults living with dementia. Our team of interaction designers, psychologists and engineers are interested in people’s attitudes to, and relationships with, robots.

We’re exploring which characteristics increase acceptance and adoption in everyday life and therefore create benefits for the user, as well as researching and discussing ethical considerations and societal issues surrounding the role of robots.

Traditionally, a strong focus in robot development concerns the functional aspects of robots. The design aesthetics of robots and their outputs (speech, gestures and emotions) are often overlooked or neglected.

We’re interested in speech interactions, the visual appearance of robots and their ability to convey emotions, so we’re exploring the impact of improved speech interfaces and communication patterns of robots with users and how these support older adults to live independently at home for longer.

Repair and recycling robots

In this stream, we are researching novel robot solutions targeting repair and recycling applications.

Projects

Together with industrial partner Plastfix Pty Ltd and supported by the Innovative Manufacturing Cooperative Research Centre (IMCRC), we've developed a revolutionary solution for the automatic repair of headlight housings. This is an excellent example of an Industry 4.0 solution, integrating 3D scanning, 3D printing and advanced robotics with the development of a novel material.

Repairbot

Repairbot was a collaboration between Plastfix, The Innovative Manufacturing CRC and Swinburne. It uses intelligent robotics, 3D printing, 3D scanning and the development of a novel polypropylene-like material to automate the repair of car headlight assemblies.

Together with Curbcycle Ltd and the University of Sydney, we are working on a novel approach to soft plastic recycling using existing infrastructure. Bags of soft plastic are put in recycling bins and automatically extracted at material recovery facilities using computer vision, machine learning and robotics. The challenges include an extremely unstructured environment and fast-moving conveyor belts.

Biofouling, the unavoidable build-up of biological growth on submerged marine infrastructure and vehicles over time, requires maintenance to remove all obstructive material. Producing replacement parts with on-board 3D printing systems is currently being trialled by US naval industries and is seen as a potential solution for critical small-scale reproduction of ship parts.

We have received funding from the Defence Science Institute to develop, optimise and quantitatively evaluate large-scale pellet-based robotic 3D printing of a novel biofouling material.

Contact the Manufacturing Futures Research Platform

If your organisation would like to collaborate with us to solve a complex problem, or you simply want to contact our team, get in touch by calling +61 3 9214 5177 or emailing MFRP@swinburne.edu.au.